MedForge

Detects medically plausible image forgeries with localized reasoning, using the MedForge-90K benchmark and a Localize-then-Analyze detector.

I study trustworthy and multimodal AI for high-stakes healthcare, spanning medical deepfake detection, LLM-generated text detection, and medical image editing, with main-conference papers at ACL 2026 and EMNLP 2025.

I am currently a second-year PhD student at the National University of Singapore, supervised by Prof. Mengling Feng.

Academic Service: Reviewer for ACL 2026, NeurIPS, IJCAI, and ACM TIST, with 10+ papers reviewed.

Research Interests: Trustworthy and Agentic LLMs • Multimodal Intelligence in Healthcare

Zhihui Chen, Kai He, Qingyuan Lei, Bin Pu, Jian Zhang, Yuling Xu, Mengling Feng# (# corresponding author)

Annual Meeting of the Association for Computational Linguistics (ACL) 2026, Main Conference

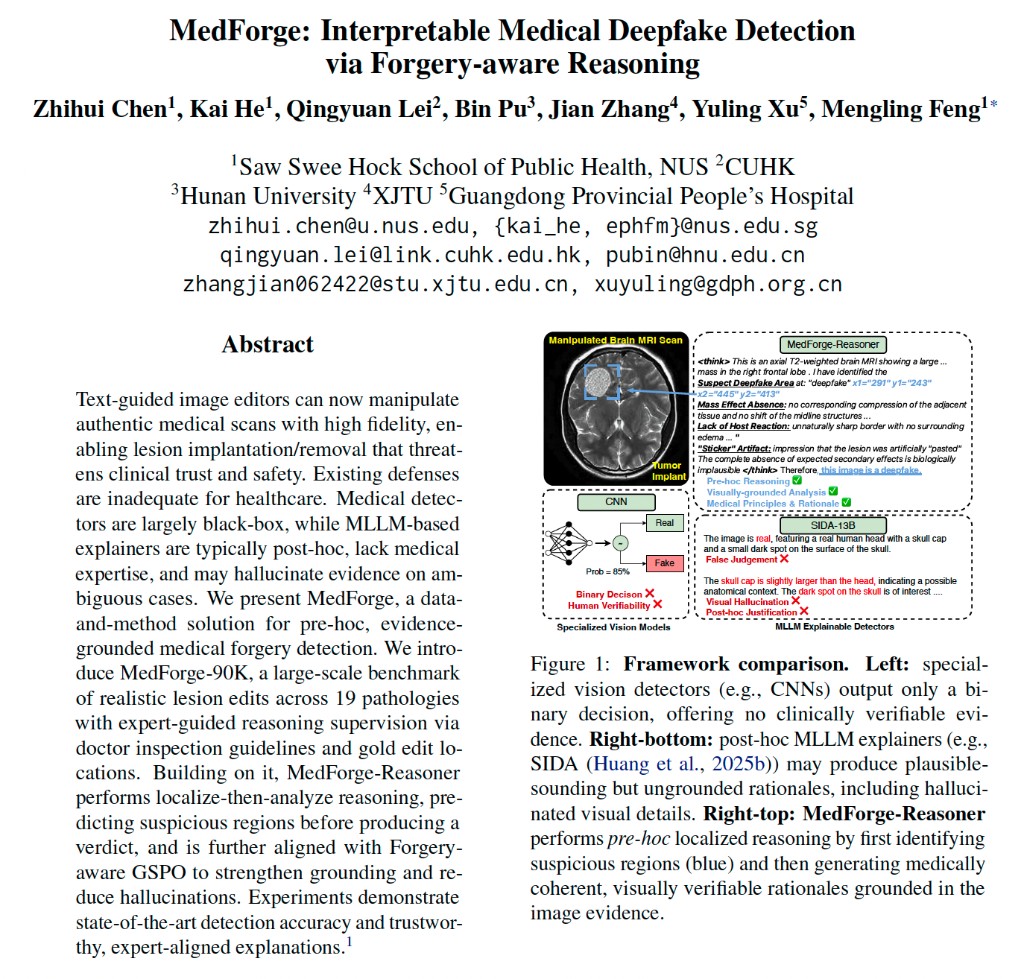

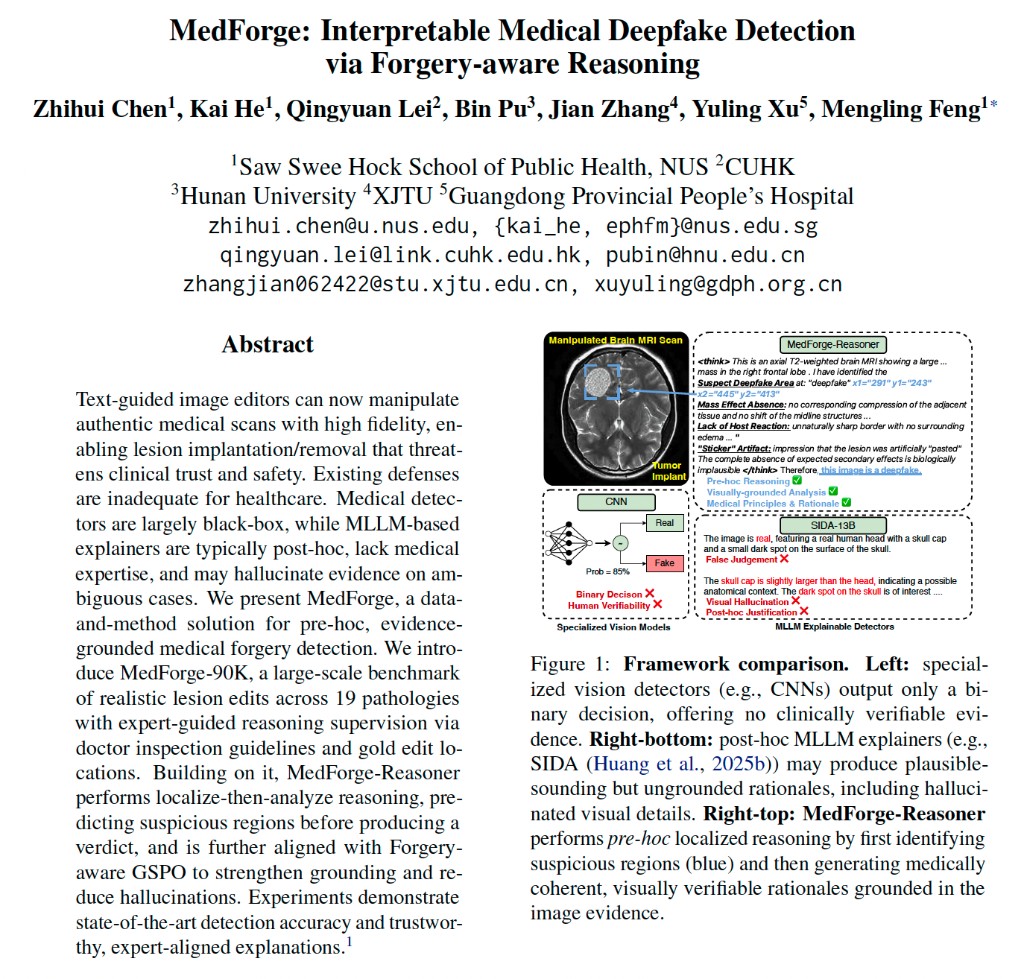

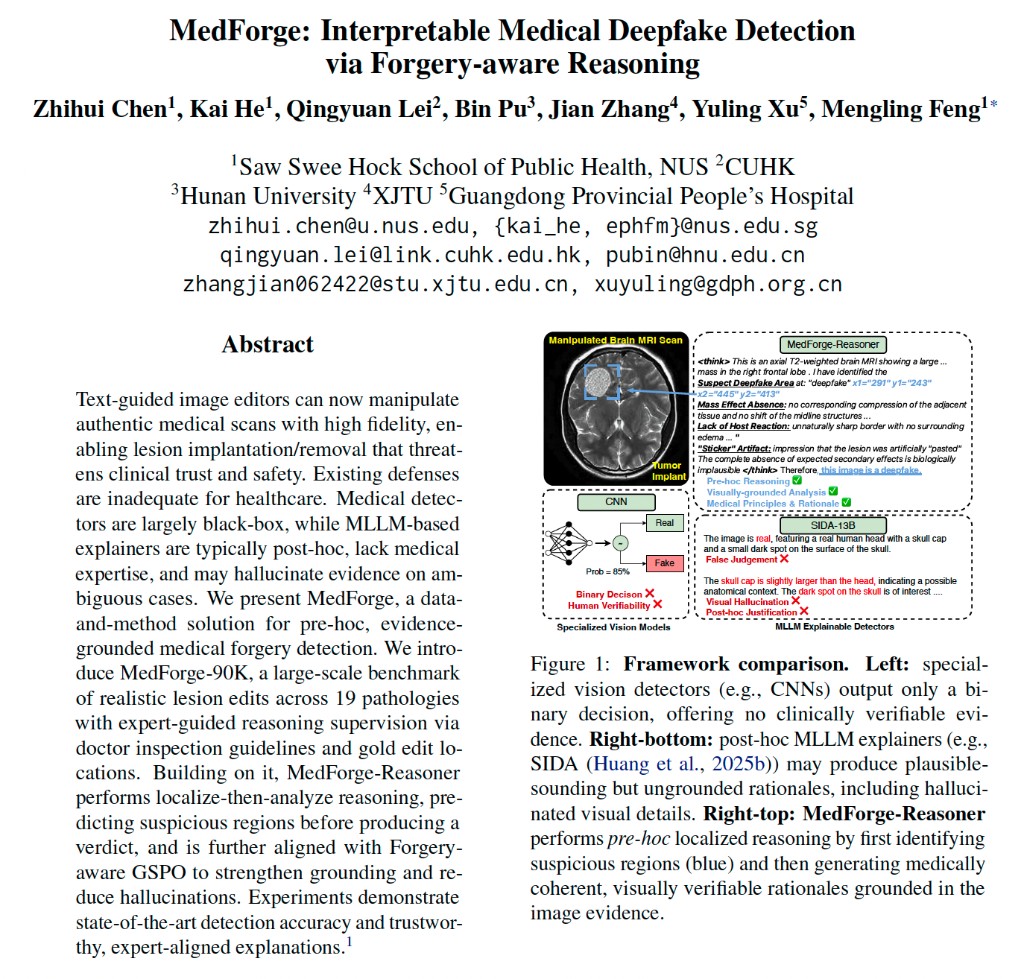

As generative models improve, medical deepfakes that implant or remove lesions while staying visually plausible pose growing risks to clinical safety and the integrity of medical evidence. Most prior work reduces detection to binary real-vs-fake scoring with little insight into where manipulation occurs or why. We present MedForge, an interpretable framework that introduces MedForge-90K—the first large-scale explainable medical deepfake dataset spanning CT, MRI, and X-ray, covering 19 lesion types with forgeries from 10 state-of-the-art deepfake models, each paired with expert-guided localization and clinical-grade explanations—and MedForge-Reasoner, a detector trained with a Localize-then-Analyze chain-of-thought paradigm and Forgery-aware GSPO reinforcement learning. MedForge-Reasoner achieves state-of-the-art detection while producing localized, verifiable medical rationales.

Zhihui Chen, Kai He, Yucheng Huang, Yunxiao Zhu, Mengling Feng

Conference on Empirical Methods in Natural Language Processing (EMNLP) 2025, Main Conference

Detecting LLM-generated text in specialized, high-stakes domains such as medicine and law is crucial for combating misinformation and ensuring authenticity. We propose DivScore, a zero-shot detection framework that uses normalized entropy-based scoring and domain knowledge distillation to robustly identify LLM-generated text in specialized domains. Experiments show that DivScore consistently outperforms state-of-the-art detectors, with 14.4% higher AUROC and 64.0% higher recall at a 0.1% false positive rate threshold.
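For readers unfamiliar with the "recall at 0.1% false positive rate" metric quoted above, the following is a minimal sketch of how such a number can be computed from detector scores. It illustrates the evaluation metric only, not the DivScore scoring method itself; the function name `recall_at_fpr`, the label convention (1 = LLM-generated, 0 = human-written), and the toy scores are assumptions made for illustration.

```python
from typing import Sequence


def recall_at_fpr(
    scores: Sequence[float],
    labels: Sequence[int],
    max_fpr: float = 0.001,
) -> float:
    """Recall (true positive rate) at the most permissive threshold
    whose false positive rate does not exceed ``max_fpr``.

    Assumed convention: label 1 = LLM-generated (positive),
    label 0 = human-written (negative); a higher score means
    "more likely LLM-generated".
    """
    neg = sorted((s for s, y in zip(scores, labels) if y == 0), reverse=True)
    pos = [s for s, y in zip(scores, labels) if y == 1]
    if not neg or not pos:
        raise ValueError("need at least one positive and one negative example")

    # How many human-written texts may be flagged within the FPR budget.
    allowed_fp = int(max_fpr * len(neg))
    if allowed_fp >= len(neg):
        return 1.0  # the budget allows flagging everything

    # Flag a text only if its score is strictly above this threshold, so at
    # most ``allowed_fp`` negatives are flagged (fewer when scores tie).
    threshold = neg[allowed_fp]
    return sum(s > threshold for s in pos) / len(pos)


if __name__ == "__main__":
    toy_scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4]
    toy_labels = [1, 1, 0, 1, 0, 0]
    print(recall_at_fpr(toy_scores, toy_labels, max_fpr=0.001))
```

With only a handful of negatives, a 0.1% budget allows zero false positives, so the threshold sits at the highest-scoring human-written text. This is why the metric is demanding: a single confidently misjudged human text can sharply reduce recall.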

Zhihui Chen, et al.

arXiv preprint 2025

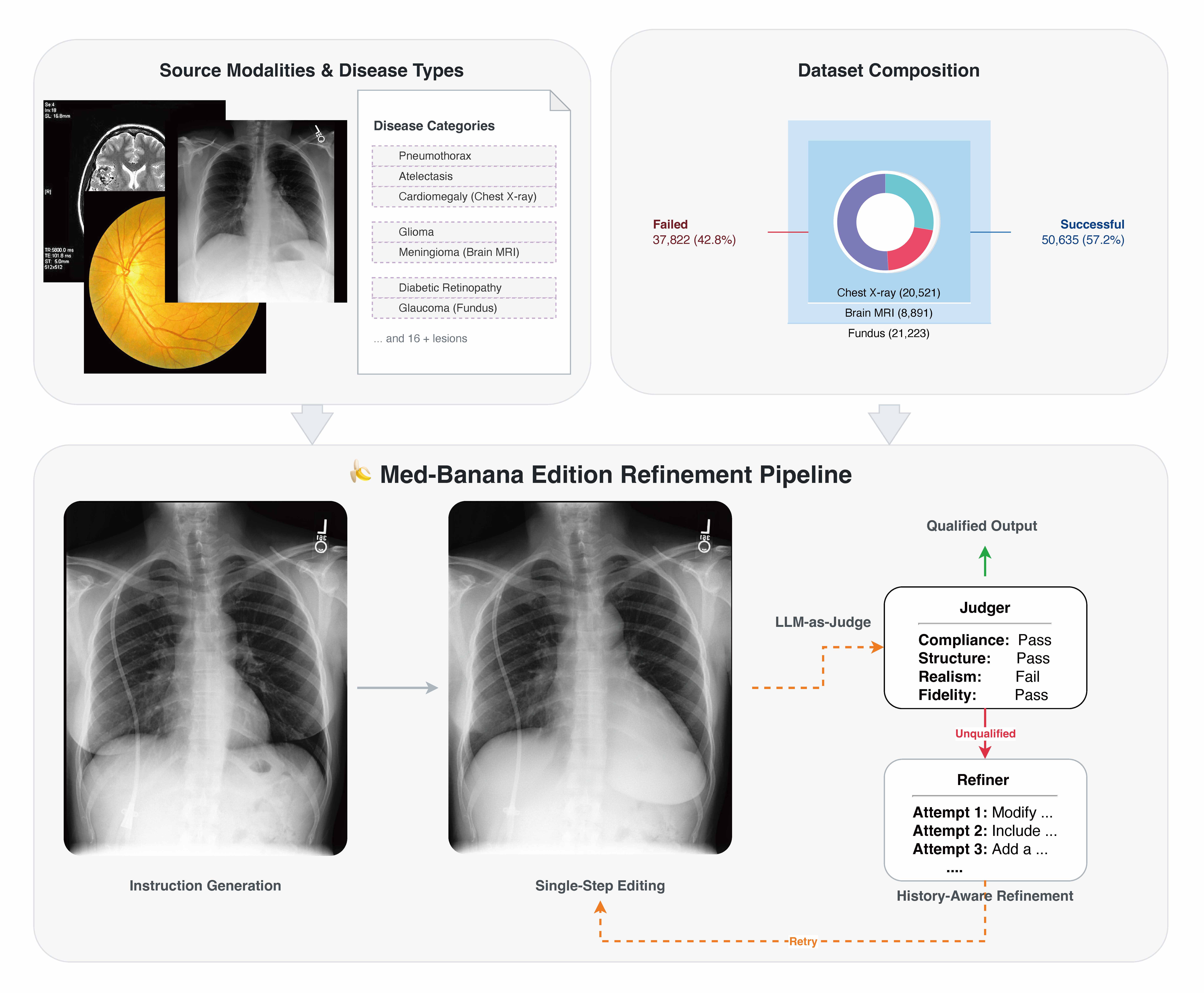

Recent advances in multimodal large language models have enabled remarkable medical image editing capabilities. However, the research community's progress remains constrained by the absence of large-scale, high-quality, and openly accessible datasets built specifically for medical image editing with strict anatomical and clinical constraints. We introduce Med-Banana-50K, a comprehensive 50K-image dataset for instruction-based medical image editing spanning three modalities (chest X-ray, brain MRI, fundus photography) and 23 disease types. Our dataset is constructed by leveraging Gemini-2.5-Flash-Image to generate bidirectional edits (lesion addition and removal) from real medical images. What distinguishes Med-Banana-50K from general-domain editing datasets is our systematic approach to medical quality control: we employ LLM-as-Judge with a medically grounded rubric and history-aware iterative refinement up to five rounds.
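The quality-control loop described above (judge each edit, feed the history back, stop after at most five rounds) can be sketched roughly as follows. This is a schematic under stated assumptions, not the pipeline used to build the dataset: `edit_fn` and `judge_fn` are hypothetical callables standing in for the image-editing model and the LLM judge, and outputs are represented as plain strings.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Attempt:
    """One round of editing plus the judge's verdict on it."""
    output: str
    passed: bool
    feedback: str


def refine_with_judge(
    instruction: str,
    edit_fn: Callable[[str, List[Attempt]], str],      # hypothetical editor
    judge_fn: Callable[[str, str], Tuple[bool, str]],  # hypothetical judge
    max_rounds: int = 5,
) -> Tuple[Optional[str], List[Attempt]]:
    """Run up to ``max_rounds`` edit/judge rounds.

    The editor sees the full history of earlier attempts and feedback,
    which is what makes the loop "history-aware". Returns the first
    output the judge accepts, or None if every round is rejected.
    """
    history: List[Attempt] = []
    for _ in range(max_rounds):
        output = edit_fn(instruction, history)
        passed, feedback = judge_fn(instruction, output)
        history.append(Attempt(output, passed, feedback))
        if passed:
            return output, history
    return None, history


if __name__ == "__main__":
    # Stub editor and judge: the judge accepts the third attempt.
    def stub_edit(instruction: str, history: List[Attempt]) -> str:
        return f"v{len(history) + 1}"

    def stub_judge(instruction: str, output: str) -> Tuple[bool, str]:
        return output == "v3", "ok" if output == "v3" else "retry"

    result, log = refine_with_judge("add lesion", stub_edit, stub_judge)
    print(result, len(log))
```

Returning the full history alongside the result keeps rejected attempts and their feedback available for later inspection, for instance to audit why a sample was discarded.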

A robust detector of LLM-generated text for high-stakes domains such as medicine and law, using normalized entropy scoring and domain knowledge distillation without retraining on new domains.
